Newsletter of the ACM Special Interest Group on Genetic and Evolutionary Computation

Editorial

Welcome to the first (Spring) 2026 issue of the SIGEvolution newsletter! We open by celebrating the 2025 ACM SIGEVO Outstanding Contribution Awardees: Prof. Mengjie Zhang and Frank Neumann, who kindly shared insights on their contributions and perspectives on evolutionary computation. We follow with a report of a recent PhD dissertation, whose author also contributed the cover image. In our final contribution, the authors reflect on their article receiving the 2025 ACM SIGEVO Impact Award.

We conclude with announcements and calls for submissions. Remember to contact us if you’d like to contribute or have suggestions for future newsletter issues.

Gabriela Ochoa (Editor)

About the Cover

The cover image is a contribution from Camilo Chacon-Sartori, generated using a web-based implementation of Search Trajectory Networks (STNWeb) he developed during his doctoral research (see our feature on his PhD later in this issue). STNWeb enables the generation of informative visualizations to support the comparison of stochastic optimization algorithms. The STNWeb 3D image on the cover uses fitness as the 3rd dimension in a network layout. It illustrates multiple search trajectories of three algorithms: Hill Climber (blue), Simulated Annealing (pink), and Genetic Algorithm (green). Yellow squares represent the initial solutions, while black cones denote the final endpoints. The red node highlights the global optimum—the best fitness value encountered—which was reached by all three algorithms in this instance.


The 2025 ACM SIGEVO Outstanding Contribution Awardees

The SIGEVO Outstanding Contribution Award recognizes remarkable contributions to Evolutionary Computation (EC) when evaluated over a sustained period of at least 15 years. These contributions can include technical innovations, publications, leadership, teaching, mentoring, and service to the EC community.

In 2025, two accomplished members of our community received this recognition: Mengjie Zhang and Frank Neumann. To celebrate these distinctions, they kindly answered our questions reflecting on their contributions and views on evolutionary computation, as well as their advice to young researchers in the field.

Prof. Mengjie Zhang

Centre for Data Science and Artificial Intelligence, Victoria University of Wellington, New Zealand

Q1: Which are the service, editorial, leadership, mentoring or other contributions you are most proud of?

I am really proud of establishing and serving as a leader of the Evolutionary Computation and Machine Learning Research Group and the Centre for Data Science and Artificial Intelligence at Victoria University of Wellington, New Zealand, which have become a centre for mentoring PhD students and early-career researchers in evolutionary computation and machine learning. I am very proud to see that many of those graduates and early-career researchers, such as Bing Xue (NZ), Yi Mei (NZ), Qi Chen (NZ), Andrew Lensen (NZ), Yanan Sun (China), Ying Bi (China), Su Nguyen (Australia), Mahdi Setayesh (America) and Urvesh Bhowan (Europe), have been playing leadership roles in our Evolutionary Computation community, particularly in Genetic Programming and Evolutionary Machine Learning and Optimisation, as well as generating real-world impact on the economy, environment, health outcomes, and society.

I am also very pleased to have the opportunity to provide direct services to our evolutionary computation community, including as a chair for five different tracks (GP, EML, SI, BIO, RWA) and four times as a Tutorial Chair for ACM GECCO, a chair for three IEEE CIS technical committees (ECTC, ETTC, and ISATC), a General Chair for CEC 2019, and a co-chair for EvoIASP and EML at EvoStar many times. I have also served as an Associate Editor for most of our major EC journals, including ECJ, TEVC, GPEM, TELO, and TCYB.

Q2: Which are your most significant technical contributions to Evolutionary Computation (EC)?

My main research areas have been genetic programming (GP) and evolutionary machine learning and optimisation. My team has developed several representations, operators, search mechanisms, and program complexity measures for GP for classification and symbolic regression (see my GP Bibliography entries maintained by Bill Langdon). We also introduced GP-based deep learning for image classification, and produced the very first human-interpretable “deep” model that integrates region detection, low-level and high-level feature construction, and classification [1-2]. My team has also developed GP methods with model complexity measures for regression and classification with unbalanced data and missing values [3-6].

In evolutionary machine learning [7-8], my research has focused on Evolutionary Feature Selection and Dimensionality Reduction [9-11], Evolutionary Deep Learning [12-13], multi-modality learning [15], transfer learning [14], and Explainable AI [16], which have been widely used for classification, regression, and image analysis. I have delivered keynote speeches at IEEE WCCI/CEC-2018 and 2024, SSCI 2022, and GECCO 2023 workshops.

In evolutionary optimisation, my research has focused on developing GP-Hyperheuristic methods for the automated design of policies/dispatching rules for scheduling, routing, resource allocation, supply chain, and other combinatorial optimisation problems, particularly under dynamic and uncertain environments, which traditional exact or (meta-)heuristic methods cannot easily handle.

My team has been working on generating significant real-world impact. We have successfully developed EC/EML methods to solve real-world problems in primary industry, climate change, (bio-)medical/health, and high-tech and high-value manufacturing, resulting in impact on the NZ economy, environment, health outcomes, and society/public policies, via collaborations with crown research institutes, industry/government agencies, and end-users. I am seeking collaborations with our European friends on jointly applying for Horizon Europe programmes/projects (NZ is an official associate member of Horizon Europe).

Q3: What are the current open problems, or topics where you think there are opportunities for substantial contributions in our field?

In GP, I believe that there are still a number of open questions for developing further contributions. One is how to develop efficient hardware to support running GP for solving large problems, just like GPU resources for running neural network models. FPGAs could be a potential direction to try. Another one is to develop novel GP representations and corresponding operators for solving large-scale problems. A harder one could be to significantly reduce the expensive evaluations by much more smartly reusing the evolved materials/codes.

For Evolutionary machine learning and optimisation, how to effectively integrate and interact with machine learning methods, and also the recent generative AI methods or models, could be a potential direction. One could also make a big effort to solve complex real-world problems (not only benchmark problems or on top of those common benchmark problems), which could increase the visibility of our EC methods in the mainstream AI community.

Q4: What advice do you have for the younger generation of researchers in the field?

For those who have a career goal of becoming an academic at a university, my first piece of advice is to focus on one or two fundamental research areas and work hard on novel or innovative ideas and methods to develop your expertise, reputation, and leadership over several years. Meanwhile, keep another two or three areas for collaborations and grant applications. A second piece of advice is to keep engaging and interacting with other people in your field to learn different aspects from them and keep your mind open. Unless you are working on pure theory or theoretical aspects, I strongly suggest that you use both benchmarks and real-world problems to test and verify your algorithms, which could be helpful for your career.

References

[1] Ying Bi, Bing Xue, Pablo Mesejo, Stefano Cagnoni, Mengjie Zhang. “A Survey on Evolutionary Computation for Computer Vision and Image Analysis: Past, Present, and Future Trends”. IEEE Transactions on Evolutionary Computation. Vol. 27, Issue 1. 2023. pp. 5-25. DOI: 10.1109/TEVC.2022.3220747.

[2] Ying Bi, Bing Xue, Mengjie Zhang. “Genetic Programming for Image Classification: A Feature Learning Approach”. Springer Book Series on Studies in Adaptation, Learning, and Optimization. Springer. 8 February 2021. ISBN: 978-3-030-65926-4. Pages: 258 + xxviii.

[3] Qi Chen, Bing Xue, Mengjie Zhang. “Structural Risk Minimisation-Driven Genetic Programming for Enhancing Generalisation in Symbolic Regression”. IEEE Transactions on Evolutionary Computation. Vol. 23, Issue 4. 2019. pp. 703-717. DOI: 10.1109/TEVC.2018.2881392.

[4] Christian Raymond, Qi Chen, Bing Xue and Mengjie Zhang. “Learning Symbolic Model-Agnostic Loss Functions via Meta-Learning”. IEEE Transactions on Pattern Analysis and Machine Intelligence. Vol. 45, Issue 11. 2023. pp. 13699-13714. DOI: 10.1109/TPAMI.2023.3294394.

[5] Urvesh Bhowan, Mark Johnston, Mengjie Zhang, Xin Yao. “Evolving Diverse Ensembles using Genetic Programming for Classification with Unbalanced Data”. IEEE Transactions on Evolutionary Computation. Vol. 17, No. 3. 2013. pp. 368-386. DOI: 10.1109/TEVC.2012.2199119.

[6] Wenbin Pei, Bing Xue, Mengjie Zhang, Lin Shang, Xin Yao, Qiang Zhang. “A Survey on Unbalanced Classification: How Can Evolutionary Computation Help?”. IEEE Transactions on Evolutionary Computation. 15pp. DOI: 10.1109/TEVC.2023.3257230.

[7] Harith Al-Sahaf, Ying Bi, Qi Chen, Andrew Lensen, Yi Mei, Yanan Sun, Binh Tran, Bing Xue*, and Mengjie Zhang*. “A Survey on Evolutionary Machine Learning”. Journal of the Royal Society of New Zealand. 2019. pp. 205-228. DOI: 10.1080/03036758.2019.1609052.

[8] Wolfgang Banzhaf, Penousal Machado, Mengjie Zhang. “Handbook of Evolutionary Machine Learning”. Springer Book Series on Genetic and Evolutionary Computation. DOI: https://doi.org/10.1007/978-981-99-3814-8. Hardcover ISBN: 978-981-99-3813-1. Published: 02 November 2023. Pages: 768 + XVI.

[9] Bing Xue, Mengjie Zhang, Will Browne, Xin Yao. “A Survey on Evolutionary Computation Approaches to Feature Selection”. IEEE Transactions on Evolutionary Computation. Vol. 20, Issue 4. 2016. pp. 606-626. DOI: 10.1109/TEVC.2015.2504420.

[10] Ruwang Jiao, Bach Nguyen, Bing Xue, Mengjie Zhang. “A Survey on Evolutionary Multiobjective Feature Selection in Classification: Approaches, Applications, and Challenges”. IEEE Transactions on Evolutionary Computation. Volume: 28, Issue: 4. 2024. pp. 1156 – 1176. DOI: 10.1109/TEVC.2023.3292527.

[11] Bach Hoai Nguyen, Bing Xue, Mengjie Zhang. “A survey on swarm intelligence approaches to feature selection in data mining”. Swarm and Evolutionary Computation. Vol. 54, May 2020, 100663 (pp. 1-16). DOI: 10.1016/j.swevo.2020.100663.

[12] Nan Li, Lianbo Ma, Guo Yu, Bing Xue, Mengjie Zhang, Yaochu Jin. “Survey on Evolutionary Deep Learning: Principles, Algorithms, Applications and Open Issues”. ACM Computing Surveys. Vol. 56, Issue 2. 2023. pp. 41(1-34). DOI: 10.1145/3603704.

[13] Yuqiao Liu, Yanan Sun, Bing Xue, Mengjie Zhang, Gary Yen, Kay Chen Tan. “A Survey on Evolutionary Neural Architecture Search”. IEEE Transactions on Neural Networks and Learning Systems. Vol. 34, Issue 2. 2023. pp. 550-570. DOI: 10.1109/TNNLS.2021.3100554.

[14] Bach Hoai Nguyen, Bing Xue, Peter Andreae and Mengjie Zhang. “A Hybrid Evolutionary Computation Approach to Inducing Transfer Classifiers for Domain Adaptation”. IEEE Transactions on Cybernetics. Vol. 51, Issue 12. 2021. pp. 6319-6332. DOI: 10.1109/TCYB.2020.2980815.

[15] Zhicheng Wu, Bing Xue, Mengjie Zhang. “Multitree Genetic Programming for Multimodal Learning in Multimodal Medical Image Classification”. IEEE Transactions on Evolutionary Computation. 2025. DOI: 10.1109/TEVC.2025.3619532.

[16] Yi Mei, Qi Chen, Andrew Lensen, Bing Xue, Mengjie Zhang. “Explainable Artificial Intelligence by Genetic Programming: A Survey”. IEEE Transactions on Evolutionary Computation. Vol. 27, Issue 3. 2023. pp. 621-641. DOI: 10.1109/TEVC.2022.3225509.

Prof. Frank Neumann

School of Computer Science and Information Technology, Adelaide University, Australia

Q1: Which are the service, editorial, leadership, mentoring or other contributions you are most proud of?

It is most rewarding for me to see former students and postdocs progressing their careers, and I regard this as most important. Over the years, I have had many students and postdocs from a wide range of countries join me in Adelaide for some time. Many stayed in Australia, and others continued their journey overseas. It is always great to catch up with some of them at GECCO and other conferences.

Q2: Which are your most significant technical contributions to Evolutionary Computation (EC)?

This is most probably something for other people to judge. I really liked working on runtime analysis for combinatorial and multi-objective optimisation. In recent years, I collaborated with my long-time colleague Carsten Witt on Fast Pareto Optimisation using Sliding Window selection. Carsten and I published a couple of papers during the last few years on this topic that I really like. The main reason is that it takes theoretical insights from runtime analysis to improve evolutionary multi-objective optimisation for constrained single-objective problems with a focus on monotone submodular optimisation [1,2]. The results make MOEAs highly effective for such problems and allow us to significantly speed up the algorithms and tackle much larger problem instances than prior to this development.

Q3: What are the current open problems, or topics where you think there are opportunities for substantial contributions in our field?

I think there is still a strong need to have evolutionary computation as an easy-to-use tool that doesn’t require too much understanding of how to use it. There have been advances in this area and in the field of algorithm selection and automated machine learning. Making EC more accessible still seems to be the crucial part for wider acceptance.

Q4: What advice do you have for the younger generation of researchers in the field?

Follow the research where you have the strongest passion and get involved in the research community through collaborations and research stays. You can learn a lot from working with people from different areas, and combining expertise can be very powerful for innovative research. There are a lot of opportunities (also in terms of funding) for early-career researchers to build their network, and they will greatly benefit from this throughout their careers.

References

[1] Frank Neumann, Carsten Witt (2025) Runtime Analysis of Single- and Multiobjective Evolutionary Algorithms for Chance-Constrained Optimization Problems with Normally Distributed Random Variables. Evol. Comput. 33(2): 191-214. https://doi.org/10.1162/evco_a_00355

[2] Frank Neumann, Carsten Witt (2026) Fast Pareto Optimization Using Sliding Window Selection for Problems with Deterministic and Stochastic Constraints. Evol. Comput. https://doi.org/10.1162/EVCO.a.368

PhD Dissertation Report

Improving Optimization Algorithms with Machine Learning and Visualization

Camilo Chacón Sartori

Universitat Autònoma de Barcelona (UAB) & Artificial Intelligence Research Institute (IIIA-CSIC)

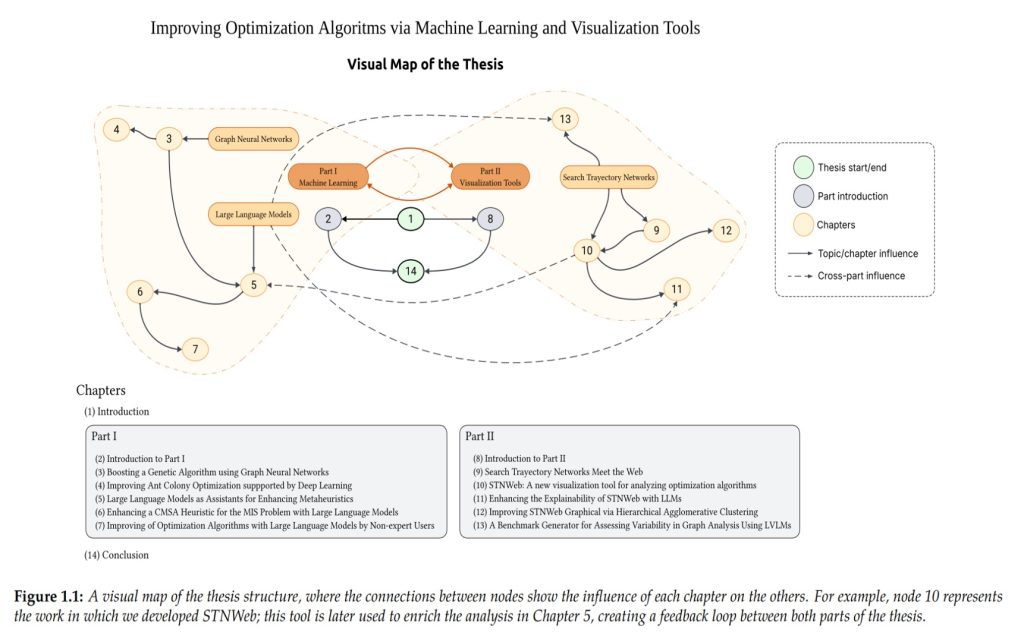

This dissertation explores how optimization algorithms can become both more effective and more interpretable. It focuses on two long-standing limitations of metaheuristics: first, their frequent lack of awareness of the specific structure of the problem they are solving; and second, their opacity, which makes it difficult to understand why they behave well in some cases and poorly in others.

To address these challenges, the thesis develops two connected research lines. The first investigates how Machine Learning can improve the internal behavior of optimization algorithms. The second studies how visualization tools can make their search dynamics easier to inspect, compare, and explain. Together, both directions support a broader goal: advancing toward an optimization approach that is not only more effective but also easier to understand.

Part I: Improving Metaheuristics with Machine Learning

The first part of the dissertation studies how learning-based models can guide the search process of general-purpose optimization algorithms.

Graph Neural Networks for Search Guidance. The initial research focused on Graph Neural Networks (GNNs), which are especially suitable for combinatorial optimization problems defined on graphs. In this line, the thesis presents hybrid approaches where GNNs learn structural signals from problem instances and then use that information to bias the search of algorithms such as BRKGA and Ant Colony Optimization. These ideas were applied to problems including Multi-Hop Influence Maximization and Target Set Selection. The results showed that GNN-based guidance can help optimization algorithms focus on more promising regions of the search space and achieve highly competitive performance.

Large Language Models as Pattern Recognition Engines. The dissertation then shifts toward Large Language Models (LLMs), but not in their usual role as text generators. Instead, it explores how they can be used as flexible engines for identifying patterns in numerical and structural data. This idea appears in OptiPatterns, a methodology where an LLM analyzes tabular information about graph features and solution distributions to infer heuristic guidance for optimization. The results suggest that LLMs can sometimes match or even outperform more specialized deep learning approaches, while being less computationally demanding and easier to adapt.

LLMs for Algorithm Augmentation. A further step in this direction is the idea of algorithm augmentation. Rather than asking an LLM to generate an optimization algorithm from scratch, the thesis studies how these models can improve existing expert-written code. One case study analyzes a C++ implementation of CMSA for the Maximum Independent Set problem, where an LLM proposed new heuristics for the construction phase that outperformed the original version. A broader benchmark extended this idea to ten optimization algorithms for the Traveling Salesman Problem, suggesting that LLMs may lower the barrier for improving sophisticated algorithmic systems, even for non-expert users.

Part II: Making Optimization More Interpretable

The second part of the dissertation focuses on interpretability. Optimization algorithms are often treated as black boxes, especially when their internal trajectories are difficult to observe. To address this, the thesis contributes to the development of STNWeb (https://www.stn-analytics.com), a web-based platform for generating and analyzing Search Trajectory Networks (STNs).

STNs represent the search process itself: nodes correspond to solutions or regions of the search space, and edges capture transitions made by the algorithm. By visualizing these trajectories, STNWeb makes it easier to study behaviors such as convergence, stagnation, cycling, or exploration patterns.
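As a rough illustration of the data structure described above, the following Python sketch merges a few search trajectories into a single network by counting visited locations (nodes) and transitions between consecutive locations (edges). It is not the STNWeb implementation: the function name `build_stn`, the bit-string location labels, and the toy runs are all hypothetical, and a real STN pipeline would also need a rule for mapping solutions to locations.

```python
from collections import Counter


def build_stn(trajectories):
    """Merge search trajectories into one network (illustrative only).

    Each trajectory is a list of hashable location labels, in the order
    the algorithm visited them. Returns (node_visits, edge_weights).
    """
    node_visits = Counter()
    edge_weights = Counter()
    for trajectory in trajectories:
        node_visits.update(trajectory)
        # Consecutive pairs are the transitions made by the algorithm.
        for src, dst in zip(trajectory, trajectory[1:]):
            if src != dst:  # skip steps that stay in the same location
                edge_weights[(src, dst)] += 1
    return node_visits, edge_weights


# Three hypothetical runs over bit-string solutions.
runs = [
    ["0000", "0100", "0110", "1110"],
    ["1001", "1011", "1010", "1110"],
    ["0000", "0100", "0110", "0111"],
]
nodes, edges = build_stn(runs)
end_points = Counter(run[-1] for run in runs)
print(len(nodes), "nodes,", len(edges), "edges")
print("most common end point:", end_points.most_common(1)[0][0])
```

Counting how often nodes and edges are shared across runs is what lets a visualization show where trajectories of different algorithms overlap or converge.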

Extending STNWeb. The platform is extended in several directions. One contribution introduces a clustering-based partitioning strategy that improves the readability of trajectory visualizations in continuous and high-dimensional spaces. Another explores the use of LLMs to automatically generate natural-language explanations from STN features, making this kind of analysis more accessible beyond specialist audiences.

Benchmarking Multimodal Models. The dissertation also connects this line of work with the evaluation of multimodal AI systems through VisGraphVar, a benchmark designed to test how well large vision-language models understand graph-based visual tasks. This part of the research helps clarify the current limits of automated visual reasoning over graph structures.

Main Contribution

Overall, the thesis proposes a unified framework for AI-assisted optimization and explainable optimization. Its central claim is that machine learning can help optimization algorithms become more context-aware, while visualization and language-based explanation can make their behavior easier to understand.

In this sense, the work is not only about improving algorithmic performance. It is also about improving our ability to inspect, reason about, and trust the systems we build, without ignoring the fact that many algorithms are already embedded in legacy systems and that AI is often more useful as a force multiplier than as a replacement.

Link: Full Dissertation (open access)

About the Author

Camilo Chacón Sartori is a postdoctoral researcher at the Catalan Institute of Nanoscience and Nanotechnology (ICN2) in Barcelona, where he is part of the Theoretical and Computational Nanoscience Group. His work lies at the intersection of optimization, artificial intelligence, and the philosophy of technology. His current research focuses on AI for Science, particularly computational optimization using evolutionary computing techniques. Alongside his research in machine learning and metaheuristics, he has also developed a growing line of work in the philosophy of technology, with a particular interest in error, fallibility, and the conceptual implications of AI-generated systems.

The 2025 ACM SIGEVO Impact Award

General Program Synthesis Benchmark Suite

Thomas Helmuth and Lee Spector

In 2012, researchers in the genetic programming community realized that the field was being held back by reliance on toy benchmark problems [1]. By 2015, some work had been done to improve benchmarking in specialized areas such as symbolic regression [2]. However, no standardized benchmark problems had yet been developed for general program synthesis, the task of automatically generating programs that are similar in many respects to the kinds of programs that humans usually write.

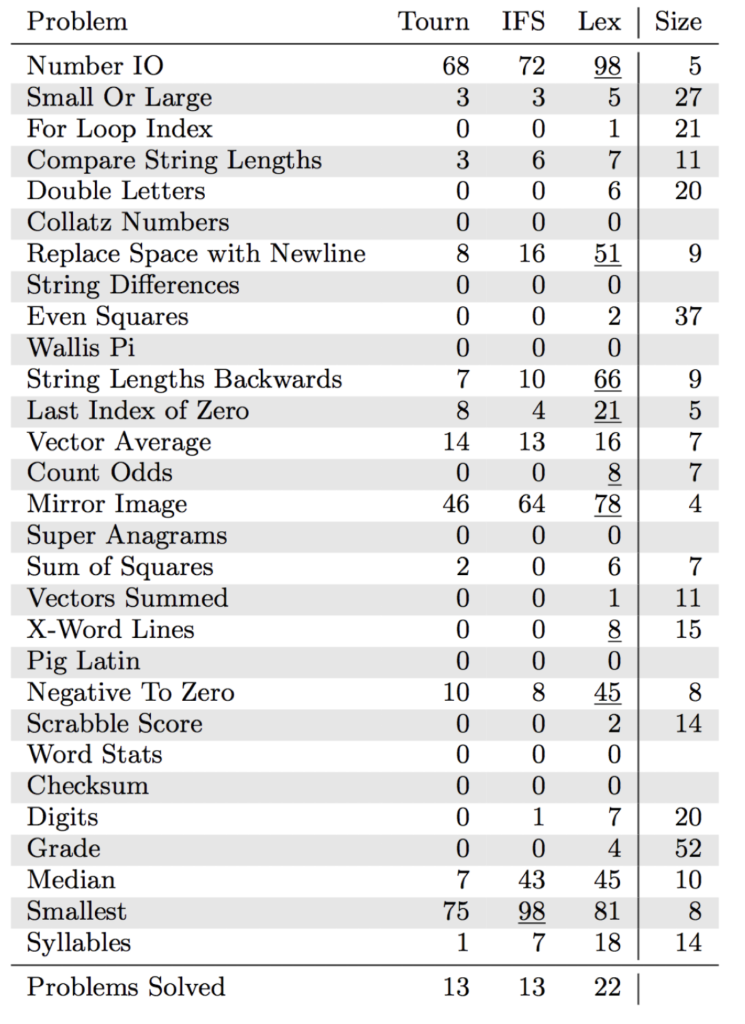

At GECCO 2015, we published the paper “General Program Synthesis Benchmark Suite”, which includes 29 problems taken from introductory programming textbooks [3]. This paper was awarded the ACM SIGEVO 10-Year Impact Award at GECCO 2025. Here we briefly reflect on the paper, its impact, and our thoughts about benchmarking program synthesis systems moving forward.

The benchmark problems provided in our 2015 paper require solution programs to manipulate eight different data types and use large, general-purpose instruction sets that include conditional execution and looping or recursion. Each problem is defined as a supervised learning problem, with specified inputs and corresponding outputs. As an example, the Syllables problem asks for a program that, given a string, returns the number of vowels in the string.
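To make the supervised framing concrete, here is a small Python sketch of how a problem like Syllables could be posed as input/output cases and how a candidate program could be scored against them. This is only an illustration under assumptions: the vowel set, the example strings, and the names `count_vowels` and `total_error` are hypothetical and not taken from the benchmark definition, which may also specify output formatting not shown here.

```python
def count_vowels(text, vowels="aeiouy"):
    """Hand-written target behaviour; the vowel set is an assumption."""
    return sum(1 for ch in text if ch in vowels)


# Training cases are (input, expected output) pairs; a synthesis system
# would see only these pairs, never the function above.
inputs = ["", "gecco", "push gp", "rhythm"]
cases = [(s, count_vowels(s)) for s in inputs]


def total_error(program, cases):
    """Sum of absolute differences between program output and target."""
    return sum(abs(program(x) - y) for x, y in cases)


print(total_error(count_vowels, cases))  # a correct program has zero error
print(total_error(len, cases))           # a wrong candidate: string length
```

A candidate program counts as a solution on the training set only when its error is zero on every case, which is why a per-case view of errors matters for the selection methods discussed next.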

Our suite of problems, now referred to as PSB1, was accompanied by recommendations for the design of experiments that could use the benchmarks to fairly compare alternative program synthesis methodologies. The paper also included an example usage, experimentally comparing three different parent selection methods (tournament selection, implicit fitness sharing, and lexicase selection) using the PushGP genetic programming system. The results from this experiment are below.
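For readers unfamiliar with lexicase selection, one of the parent selection methods named above, the following Python sketch shows the basic idea: training cases are considered one at a time in random order, and only the candidates with the best error on the current case survive to the next. It is a minimal sketch rather than the PushGP implementation; the function name `lexicase_select`, the error-matrix layout, and the toy population are assumptions made for illustration.

```python
import random


def lexicase_select(population, errors, rng=random):
    """Pick one parent; errors[i][j] is individual i's error on case j."""
    candidates = list(range(len(population)))
    cases = list(range(len(errors[0])))
    rng.shuffle(cases)  # a fresh random case ordering for every selection
    for case in cases:
        best = min(errors[i][case] for i in candidates)
        # Keep only the candidates that are best on this case.
        candidates = [i for i in candidates if errors[i][case] == best]
        if len(candidates) == 1:
            break
    # Break any remaining ties at random.
    return population[rng.choice(candidates)]


# Toy population: A and B are each best on one case and tie on the third;
# C has moderate error on every case but is never best on any of them.
population = ["A", "B", "C"]
errors = [
    [0, 5, 2],
    [5, 0, 2],
    [3, 3, 3],
]
rng = random.Random(0)
picks = {lexicase_select(population, errors, rng) for _ in range(200)}
print(sorted(picks))
```

In this toy example the generalist C is never selected, while the two specialists are, which illustrates how lexicase selection differs from methods that rank individuals by an aggregate fitness value.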

Since 2015, over 200 studies have been published that cite the original PSB1 paper, many of which use the problems to benchmark genetic programming and other program synthesis systems. Sobania, Schweim, and Rothlauf surveyed the use of evolutionary algorithms for program synthesis by looking at all studies that made use of PSB1 [4]. They found that stack-based genetic programming (primarily PushGP), grammar-guided genetic programming, and linear genetic programming were the main types of genetic programming benchmarked with PSB1, and that papers using PSB1 had been published in a variety of conferences and journals. Below is a list of genetic programming systems and techniques that have been benchmarked using PSB1:

- lexicase parent selection (and many variants)

- grammar design patterns for grammatical evolution

- domain knowledge integration in grammatical evolution

- novelty selection

- generalization of genetic programming solutions

- higher-order typed genetic programming

- phylogeny-informed fitness estimation

- program synthesis with variational autoencoders

- code similarity and modularity metrics

In addition, a handful of non-genetic programming program synthesis techniques have used problems from PSB1.

So, what is the state of the field for program synthesis using genetic programming? We see improvement from 2015, when 22 of the 29 PSB1 problems were solved at least once out of 100 runs using PushGP, to today, when 26 of the problems have been solved [4]. Additionally, PushGP and grammar-guided genetic programming have dramatically improved their frequency of success on many of the PSB1 problems. To avoid over-specialization on this one set of problems, a second program synthesis benchmark suite, PSB2, was published in 2021 [5]. Genetic programming systems have solved some, but not all, of these new problems, and still struggle when given tasks that require larger, more complex programs to solve.

At present, when large language models (LLMs) are dominating the conversation about program synthesis, how should we think about genetic programming for program synthesis, and about benchmarking program synthesis? Both in 2015 and today, we see two primary roles for genetic programming in program synthesis: to automate programming tasks based on examples, and to write programs that solve unsolved problems.

With these goals in mind, are there places where genetic programming may shine compared to LLM program generation? To begin, some solutions to unsolved problems may look so different from anything humans have ever written that LLMs are unlikely to be able to produce them. Additionally, genetic programming usually behaves entirely as a supervised learning system: it is only given examples of the desired behavior, instead of an English-language description of the system. It may therefore make sense to use genetic programming for problems in which it is more natural to give specifications based on inputs and outputs, rather than descriptions of the desired programs. We may also find opportunities to use LLMs and GP together, in ways that produce results that are superior to those of either approach when used individually.

While genetic programming has been successful in solving the introductory programming problems of PSB1, current systems have trouble scaling up to larger and more complex problems and programs. If we want genetic programming to build larger pieces of software, we will have to improve our methods for automatically generating the modular, hierarchical program structures that humans use to build software. We will also need benchmark problems that assess the ability of our systems to solve novel problems.

While PSB1 has provided useful benchmarking targets for program synthesis systems over the last decade or so, the research community will require updated benchmark problems and benchmarking practices for solving problems that are larger and more novel. These considerations should guide the future creation of program synthesis benchmark problems.

References

[1] James McDermott, David R. White, Sean Luke, Luca Manzoni, Mauro Castelli, Leonardo Vanneschi, Wojciech Jaskowski, Krzysztof Krawiec, Robin Harper, Kenneth De Jong, and Una-May O’Reilly. 2012. Genetic Programming Needs Better Benchmarks. In Proceedings of the 14th annual conference on Genetic and evolutionary computation (GECCO ’12). ACM.

[2] Miguel Nicolau, Alexandros Agapitos, Michael O’Neill and Anthony Brabazon. 2015. Guidelines for defining benchmark problems in Genetic Programming. In 2015 IEEE Congress on Evolutionary Computation (CEC). IEEE.

[3] Thomas Helmuth and Lee Spector. 2015. General Program Synthesis Benchmark Suite. In Proceedings of the 2015 Annual Conference on Genetic and Evolutionary Computation (GECCO ’15). ACM.

[4] Dominik Sobania, Dirk Schweim and Franz Rothlauf. 2023. A Comprehensive Survey on Program Synthesis With Evolutionary Algorithms. In IEEE Transactions on Evolutionary Computation. IEEE.

[5] Thomas Helmuth and Peter Kelly. 2021. PSB2: The Second Program Synthesis Benchmark Suite. In Proceedings of the Genetic and Evolutionary Computation Conference (GECCO ’21). ACM.

About the Authors

Thomas Helmuth is the Sacerdote Family Distinguished Associate Professor of Computer Science at Hamilton College in New York, USA. He received his Ph.D. from University of Massachusetts Amherst. His research focuses on algorithmic improvements to genetic programming for general program synthesis. Specific areas of study include genome/program representation, parent selection, and benchmarking program synthesis.

Lee Spector is the Class of 1993 Professor of Computer Science and the Chair of the Department of Computer Science at Amherst College, and an Adjunct Professor and member of the graduate faculty in the College of Information and Computer Sciences at the University of Massachusetts, Amherst. He works on a variety of topics at the intersections of computer science with cognitive science, philosophy, physics, evolutionary biology, and the arts. He formerly served as the Editor in Chief of Genetic Programming and Evolvable Machines and as a member of the ACM SIGEVO Executive Board.

Journal Latest Issues

Genetic Programming and Evolvable Machines (GPEM)

Editor-in-Chief: Leonardo Trujillo

June, 2026

ACM Transactions on Evolutionary Learning and Optimization (TELO)

Editors-in-Chief: Jürgen Branke, Manuel López-Ibáñez

Volume 6, Issue 1

March, 2026

Forthcoming Events

EvoStar 2026 @ Toulouse, France (hybrid)

The EvoStar conferences are being held in Toulouse, France from 8 to 10 April 2026 in hybrid mode. Read more about EvoStar here.

Visit the EvoStar Conferences Webpages

GECCO 2026 @ San José, Costa Rica (hybrid)

The Genetic and Evolutionary Computation Conference

July 13 – 17, 2026

The Genetic and Evolutionary Computation Conference (GECCO) has presented the latest high-quality results in genetic and evolutionary computation since 1999. Topics include: genetic algorithms, genetic programming, swarm intelligence, complex systems, evolutionary combinatorial optimization and metaheuristics, evolutionary machine learning, learning for evolutionary computation, evolutionary multiobjective optimization, evolutionary numerical optimization, neuroevolution, real-world applications, theory, benchmarking, reproducibility, hybrids and more.

Call for submissions

Parallel Problem Solving From Nature (PPSN)

August 29 – September 2, 2026

The International Conference on Parallel Problem Solving From Nature (PPSN) is a biennial open forum fostering the study of natural models, iterative optimization heuristics, machine learning, and other artificial intelligence approaches. PPSN was originally designed to bring together researchers and practitioners in the field of Natural Computing, the study of computing approaches that are gleaned from natural models. Today, the conference series has evolved and welcomes works on all types of iterative optimization heuristics. Notably, we also welcome submissions on connections between search heuristics and machine learning or other artificial intelligence approaches. Submissions covering the entire spectrum of work, ranging from rigorously derived mathematical results to carefully crafted empirical studies, are invited.

Detailed Call for Papers.

Important Dates

- Paper submission deadline: March 28, 2026 (Friday)

- Paper review due: May 11, 2026 (Monday)

- Notification of acceptance: May 22, 2026 (Friday)

- Camera-ready papers due: June 12, 2026 (Friday)

23rd Annual (2026) “Humies” Awards

For Human-Competitive Results – Produced by Genetic and Evolutionary Computation

To be held as part of the Genetic and Evolutionary Computation Conference (GECCO)

July 13-17, 2026 (Monday – Friday), San José, Costa Rica (hybrid)

Detailed Call for Entries

Entries are hereby solicited for awards totaling $10,000 for human-competitive results that have been produced by any form of genetic and evolutionary computation (including, but not limited to, genetic algorithms, genetic programming, evolution strategies, evolutionary programming, learning classifier systems, grammatical evolution, gene expression programming, differential evolution, genetic improvement, etc.) and that have been published in the open, reviewed literature between the deadline for the previous competition and the deadline for the current competition.

Important Dates

- Friday, May 29, 2026: Deadline for entries (consisting of one TEXT file, PDF files for one or more papers, and possible “in press” documentation). Please send entries to goodman at msu dot edu

- Friday, June 12, 2026: Finalists will be notified by e-mail

- Friday, June 26, 2026: Finalists must submit a 10-minute video or, if presenting in person, their slides, to goodman at msu dot edu.

- July 13-17, 2026 (Monday – Friday): GECCO conference (the schedule for the Humies session is not yet final, so please check the GECCO program as it is updated for the time of the Humies session). GECCO will be in hybrid mode, so the finalists may present their entry in person or on video.

- Friday, July 17, 2026: Announcement of awards at the plenary session of the GECCO conference.

About this Newsletter

SIGEVOlution is the newsletter of SIGEVO, the ACM Special Interest Group on Genetic and Evolutionary Computation. To join SIGEVO, please follow this link: [WWW].

We solicit contributions in the following categories:

Art. Are you working with Evolutionary Art? We are always looking for nice evolutionary art for the cover page of the newsletter.

Short surveys and position papers. We invite short surveys and position papers in EC and EC-related areas. We are also interested in applications of EC technologies that have solved interesting and important problems.

Software. Are you a developer of a piece of EC software, and wish to tell us about it? Then send us a short summary or a short tutorial of your software.

Lost Gems. Did you read an interesting EC paper that, in your opinion, did not receive enough attention or should be rediscovered? Then send us a page about it.

Dissertations. We invite short summaries, around a page, of theses in EC-related areas that have been recently defended and are available online.

Meeting Reports. Did you participate in an interesting EC-related event? Would you be willing to tell us about it? Then send us a summary of the event.

Forthcoming Events. If you have an EC event you wish to announce, this is the place.

News and Announcements. Is there anything you wish to announce, such as an employment vacancy? This is the place.

Letters. If you want to ask or say something to SIGEVO members, please write us a letter!

Suggestions. If you have a suggestion about how to improve the newsletter, please send us an email.

Contributions will be reviewed by members of the newsletter board. We accept contributions in plain text, MS Word, or LaTeX, but do not forget to send your sources and images.

Enquiries about submissions and contributions can be emailed to gabriela.ochoa@stir.ac.uk.

All the issues of SIGEVOlution are also available online at: https://evolution.sigevo.org/.

Notice to contributing authors to SIG newsletters

As a contributing author, you retain the copyright to your article. ACM will refer all requests for republication directly to you.

By submitting your article for distribution in any newsletter of the ACM Special Interest Groups listed below, you hereby grant to ACM the following non-exclusive, perpetual, worldwide rights:

- to publish your work online or in print on the condition of acceptance by the editor

- to include the article in the ACM Digital Library and in any Digital Library-related services

- to allow users to make a personal copy of the article for noncommercial, educational, or research purposes

- to upload your video and other supplemental material to the ACM Digital Library, the ACM YouTube channel, and the SIG newsletter site.

If third-party materials were used in your published work, supplemental material, or video, make sure that you have the necessary permissions to use those third-party materials in your work.

Editor: Gabriela Ochoa

Sub-editors: James McDermott and Nadarajen Veerapen

Associate Editors: Emma Hart, Bill Langdon, Una-May O’Reilly, and Darrell Whitley